Secret Agent Man

New theories of "causal emergence" reveal that agency doesn't require a self. Some agents are octopus arms. Some are mobs. And one might be running on your default mode network right now.

What is agent? (Baby, don't hurt me)

It could be so simple: an agent is a self-contained thing (often with a "self," not always) that acts. Bam, done. You know it when you see it. It's you; it's your dog; maybe your Roomba. Thank you, neuroscience and software, for raining on this parade.

For organisms, we now know that the "self" doing the "acting" is less a unified commander than a noisy coalition of competing processes, most of which you'll never be conscious of. AI systems exhibit goal-directed behavior without anything we'd confidently call experience. We now have formal mathematical frameworks showing that the scale at which a system's causal structure is most informative isn't necessarily the scale of its smallest parts. And we have Daniel Wegner's unsettling work showing that the feeling of "I did that" can be cleanly separated from actually having done it; the sense of agency is a post-hoc narrative, not a direct perception of inner command.

The old definition assumed the agent was a given, a clean starting point. It's actually something that emerges and has to be discovered, the way a patient at the eye doctor sees a shape come into focus as the optometrist turns a dial. The trouble begins there. It ends by unveiling some very real agents you may not like.

Causal emergence, or when the map beats the territory

Neuroscientist Erik Hoel's work on causal emergence is one of those things you're going to be hearing about more.

The core idea, in plain terms, is that when you look at a complex system at the level of its individual micro-components, the causal relationships between them can be noisy, degenerate, hard to predict. But when you zoom out to a macro-description, grouping micro-states into larger units, the causal structure can actually sharpen. The macro-level becomes more deterministic, more informative, than the micro-level it supposedly "comes from." In a precise information-theoretic sense, the territory gives rise to the map, but the map has certain powers over it.
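For the curious, here is a minimal sketch of what "more informative at the macro-level" can mean in numbers. It uses effective information (EI), the measure the causal emergence literature is built around as I understand it: poke the system into every state with equal probability and ask how many bits that tells you about the next state. The four-state toy system, the grouping, and the function names are all assumptions made for illustration, not anything taken from a specific paper's code.

```python
from math import log2

def effective_information(tpm):
    """EI in bits for a transition probability matrix (rows sum to 1).

    Computed as the average KL divergence between each row and the
    mean row, which equals the mutual information between a uniformly
    intervened-on current state and the resulting next state.
    """
    n = len(tpm)
    avg = [sum(row[j] for row in tpm) / n for j in range(len(tpm[0]))]
    total = 0.0
    for row in tpm:
        total += sum(p * log2(p / q) for p, q in zip(row, avg) if p > 0)
    return total / n

def coarse_grain(tpm, groups):
    """Macro-level matrix: average the member rows of each source group,
    then sum the probability landing in each destination group."""
    macro = []
    for src in groups:
        macro.append([
            sum(tpm[i][j] for i in src for j in dst) / len(src)
            for dst in groups
        ])
    return macro

# Hypothetical micro system: states 0-2 hop randomly among themselves
# (noisy, degenerate); state 3 always stays put.
micro = [
    [1/3, 1/3, 1/3, 0.0],
    [1/3, 1/3, 1/3, 0.0],
    [1/3, 1/3, 1/3, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]

# Zoom out: lump the three noisy states into one macro-state.
groups = [[0, 1, 2], [3]]
macro = coarse_grain(micro, groups)

ei_micro = effective_information(micro)
ei_macro = effective_information(macro)

print(f"micro EI: {ei_micro:.3f} bits")  # micro EI: 0.811 bits
print(f"macro EI: {ei_macro:.3f} bits")  # macro EI: 1.000 bits
```

The macro description has fewer states yet carries more causal information, because lumping the three interchangeable states together throws away only noise. Real systems need a search over many possible groupings; this sketch just checks one.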

Hoel and biologist Michael Levin have applied this to biological systems with striking results. Gene regulatory networks that undergo associative learning literally increase their causal emergence; learning reifies the higher-level agent. The example that makes this visceral is that when a rat presses a lever to get food, the cells in its paw touch the lever, and the cells in its gut receive the reward. No single cell experiences both events. The "rat" – not the rat's brain, really the rat – is the macro-scale entity that becomes more coherent through learning, owning the associative memory none of its parts can have.

This gives us a definition of agency that's useful across substrates and scales, if a bit ugly: an agent is a causally-emergent locus of influence. At a bit more length: it's a pattern of organization whose dynamics are more informatively described at its own level than at the level of its parts. It maintains a functional boundary with its environment through which it models, predicts, adapts, and acts to constrain future states toward a subset of possibilities. That functional boundary, in the literature, is called a Markov blanket: the statistical membrane separating an entity's internal states from its environment.
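The statistical heart of a Markov blanket is conditional independence: once you know the blanket's state, the outside world tells you nothing further about the inside. Here is a deliberately stripped-down sketch of that property. The three binary variables and every probability in it are made up for illustration, and real blankets in the literature have both sensory and active states and unfold over time, which this toy ignores.

```python
from math import log2
from itertools import product

# Hypothetical toy world: external E -> blanket B -> internal I, all binary.
p_e = [0.5, 0.5]
p_b_given_e = [[0.9, 0.1], [0.2, 0.8]]  # indexed [e][b]
p_i_given_b = [[0.8, 0.2], [0.3, 0.7]]  # indexed [b][i]

# Joint distribution over (e, b, i) built from the chain above.
joint = {
    (e, b, i): p_e[e] * p_b_given_e[e][b] * p_i_given_b[b][i]
    for e, b, i in product(range(2), repeat=3)
}

def marginal(dist, keep):
    """Sum out every variable whose position is not listed in `keep`."""
    out = {}
    for state, p in dist.items():
        key = tuple(state[k] for k in keep)
        out[key] = out.get(key, 0.0) + p
    return out

def mutual_info_e_i(dist):
    """I(E; I) in bits: how much the outside says about the inside."""
    p_ei = marginal(dist, (0, 2))
    pe = marginal(dist, (0,))
    pi = marginal(dist, (2,))
    return sum(
        p * log2(p / (pe[(e,)] * pi[(i,)]))
        for (e, i), p in p_ei.items() if p > 0
    )

def cond_mutual_info_e_i_given_b(dist):
    """I(E; I | B) in bits: what the outside adds once B is known."""
    pb = marginal(dist, (1,))
    p_eb = marginal(dist, (0, 1))
    p_bi = marginal(dist, (1, 2))
    return sum(
        p * log2(p * pb[(b,)] / (p_eb[(e, b)] * p_bi[(b, i)]))
        for (e, b, i), p in dist.items() if p > 0
    )

mi = mutual_info_e_i(joint)
cmi = cond_mutual_info_e_i_given_b(joint)

print("outside informs inside:", mi > 0)
print("blanket screens it off:", abs(cmi) < 1e-9)
```

Both checks should print True: the inside tracks the outside, but only through the membrane. That screening-off is what lets you draw a boundary around "the agent" at all.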

The crucial implication here is that agency isn't binary but instead graded, multi-dimensional, independent of substrate. You don't need a soul, a brain, or biology; you don't even need subjective experience; you need causal emergence.

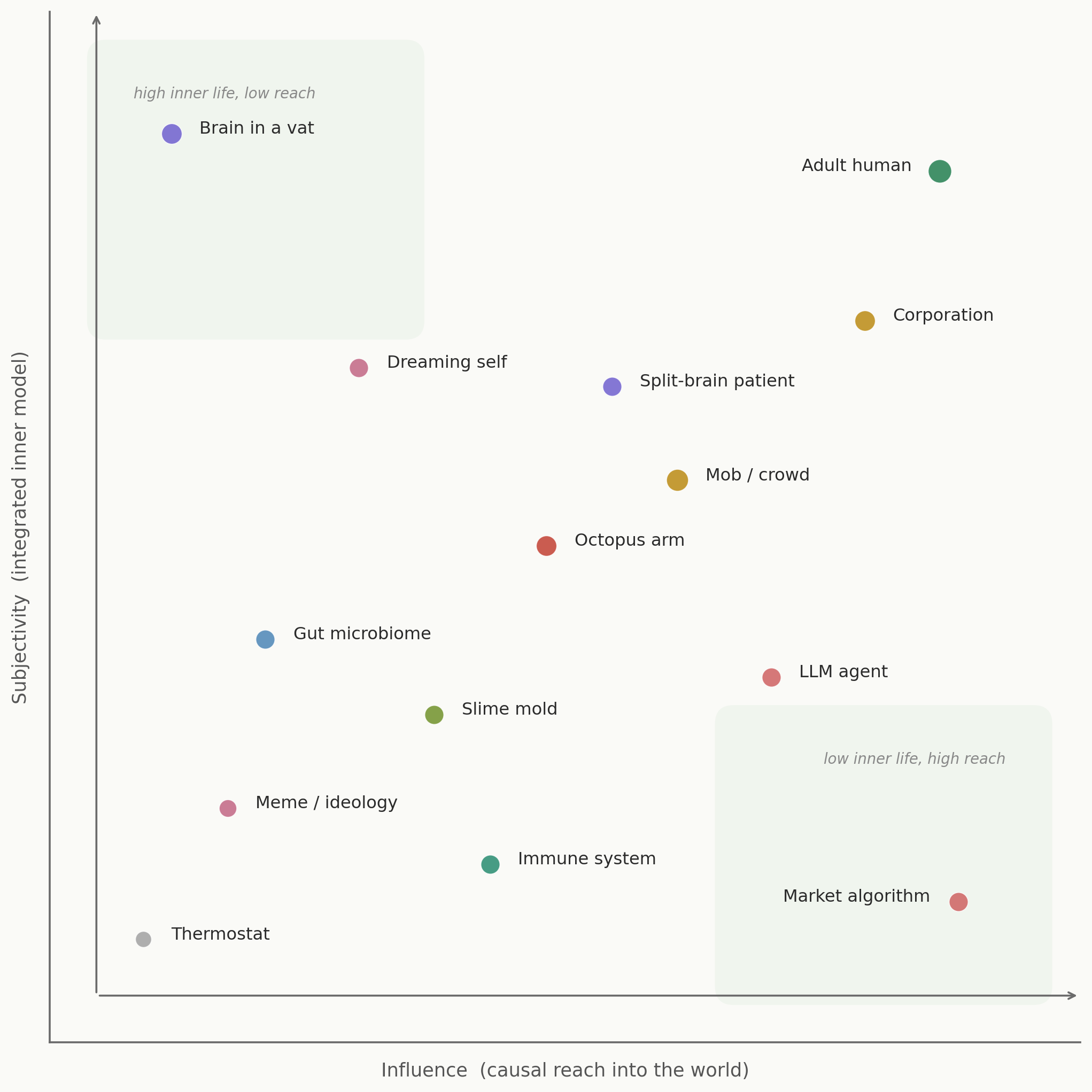

Subjectivity vs. influence

This opens up a somewhat exotic taxonomy of agency. Consider two axes: subjectivity (how integrated and rich is the inner model?) and influence (how much causal reach does it have in the world?).

The familiar corners are boring. Humans (and small, smart teams of humans particularly) are in the top-right. Rocks would be just about at the graph's origin. The interior is where things get cool and weird.

The octopus arm. Two-thirds of an octopus's neurons live in its arms. Each arm can taste, explore, and solve problems semi-autonomously. A severed arm continues goal-directed behavior for minutes. Is the octopus one agent or nine loosely federated ones? The arm sits near the center of the graph. It has real local integration, real environmental influence, and deeply unclear subjectivity. Our categories of agency, our words for it, weren't built for this.

The immune system. It keeps an extraordinarily detailed model of self versus non-self. It learns, remembers for decades, adapts in real time, and deploys lethal force. By every functional criterion, it's an agent: degrees of freedom, coherence, self-preservation, adaptation. It just doesn't appear to have any phenomenology whatsoever. High modeling, high influence – subjectivity dial near zero.

A mob. A temporary agent that assembles through emotional contagion, acquires enormous destructive or creative power, and dissolves within hours. Its Markov blanket forms and collapses in an afternoon. There's something like a shared affective state, but is the crowd experiencing something or are 10,000 individuals each experiencing something that correlates? The mob is a case study in how quickly causal emergence can crystallize, and how ambiguous the subjectivity question becomes at collective scales.

The gut microbiome. It lives inside you, collecting metabolic preferences that steer your mood, cravings, and even cognition. It has stable dynamics that persist through individual bacterial turnover. Identity without selfhood. It's what happens when one agent nests inside another and partly controls it from below. This will be important later.

A meme. No substrate of its own. It propagates across millions of minds using human subjectivity as its medium rather than possessing any. Yet memes help shape elections, start wars, and determine what people eat. From a causal emergence perspective, the meme may in fact be the scale at which certain macro-social dynamics snap into focus. You lose predictive power if you reduce to individual believers. Sort of like the microbiome, it's an agent with no inner life, but it runs on other agents' inner lives.

Edge cases? Philosophical curiosities? I don't think so. They're what agency actually looks like when you let go of dogma – when you venture into the bottom right corner of this graph and stop needing a "unified subject." The causal emergence is real and measurable, just not packaged the way Descartes (and we) are used to.

Ripping away the Markov blanket

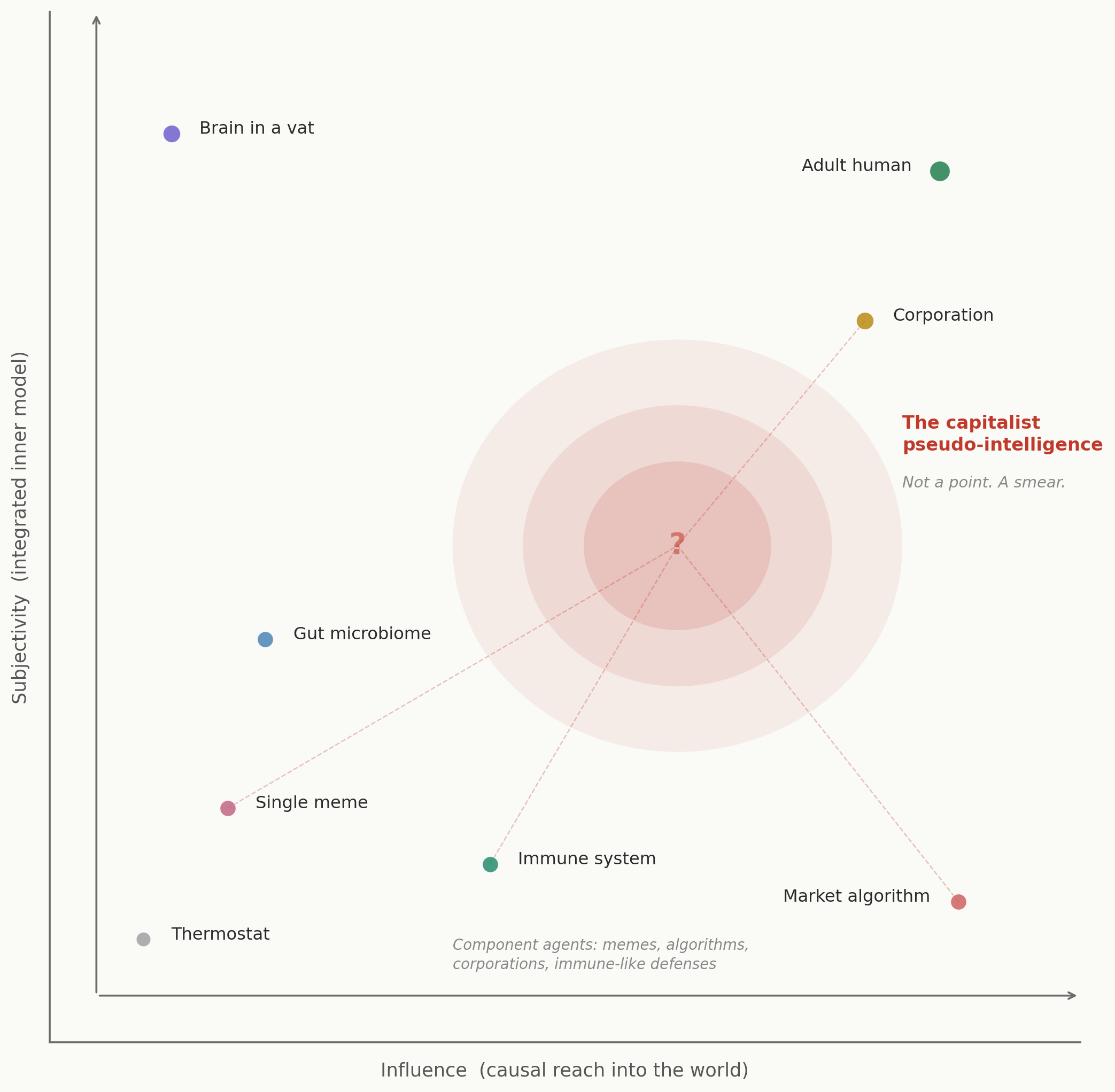

Imagine a composite entity, made of memes, market algorithms, corporations, cultural myths, and attention-capturing feedback loops. In other words: a dot on the graph with its edges pulled apart and blurred.

It's not designed by anyone; it's not centrally controlled; it nonetheless exhibits sophisticated optimization behavior. It shapes the cognition and agency of billions of people in parallel.

I know how a lot of people feel as soon as you say the word "capitalist" with even the slightest twinge of negativity, but we have to call a spade a spade here. (For the record, there's no hard guarantee that something like this couldn't arise in a different economic system.)

There is a thing we might call a "capitalist pseudo-intelligence," and it doesn't sit at a point on our graph; it smears across it.

Its parts have their own positions. Market algorithms have enormous influence and no inner life; corporations have something resembling an integrated worldview plus more surgical causal reach. The system even has immune-like defenses: the way it co-opts critique and metabolizes dissent, turning anti-consumerism into its own product category, works like a sophisticated self/non-self discrimination.

The question is whether the whole complex constitutes an agent at a scale above its components. The causal emergence test asks: does the system's behavior become more informative at the macro-level than at the micro-level?

And here's where it gets genuinely uncomfortable: yes, it appears to.

The default mode network exploit

There's a specific feedback loop I want to talk about that only resolves at the macro-scale. It takes a bit of backstory, a smidgeon of neuroscience; bear with me.

Neuroscience has identified a great many major brain networks; two that are particularly important in daily life, and work in tension, are the Default Mode Network (DMN) and the Central Executive Network (CEN). The former handles self-referential processing, rumination, and social comparison; the latter is responsible for sustained attention, planning, and goal-directed action. They compete; when one is dominant, the other is suppressed. (Mindfulness meditation, by recruiting both the CEN and the "salience network," tames the DMN.)

Think about what a population with chronically hyperactive DMNs and underactive CENs functionally is, from the perspective of capitalism: perpetually in the mode of wanting, comparing, self-narrating, simulating futures. It is the exact psychological posture that drives consumption and paralysis rather than sustained resistance: seizing resources, doing the hard work of deliberating collectively. Desire requires the DMN's daydreaming machinery; brand loyalty requires its social comparison circuitry; political passivity requires the low-grade anxiety that DMN rumination produces as it runs hot without resolution. The attention economy not only benefits from this state but selects for it (again, like an immune system). A scattered mind is more likely to keep scrolling than one with a singular external goal. Frequent context-switching is the basic operating mode of social media, with its notifications and feeds, and it prevents the CEN from fully taking over. When you outsource planning, navigation, and decision-making to algorithmic systems, you are neurologically de-exercising the CEN, making the DMN-dominant state more entrenched through plain old neural plasticity.

This isn't a conspiracy. No one designed it (even if some are very intent on continuing to benefit from it). It's an attractor state, or if you're a bit pessimistic, an "evolutionary trap." More dryly, it's what you converge on when you let monetization pressures optimize information environments over time. The system behaves as if it understood human neurology and optimized against it, without any central comprehension. A pseudo-intelligence. A secret agent? If the shoe fits.

Running on borrowed subjectivity

Here's what makes this entity novel and uniquely creepy in the agent taxonomy: every other agent on our graph, however exotic, has its own computational substrate. The octopus arm has its neurons; the immune system has its cells; even a meme or gut bacterium can be described as a pattern that runs on something fairly physical – a brain's visual processing power, a stomach.

But the capitalist pseudo-intelligence does something stranger: it uses human subjectivity itself, the inner experience of desire and anxiety and self-narration, as the medium through which it computes and propagates.

It doesn't have subjectivity; it runs on yours. This is what we might call borrowed subjectivity, and it's why the "parasite" or "brain worm" metaphor is tempting, but a parasite at least has its own body. This is more like a pattern that is the distortion of its hosts' cognition. Its Markov blanket (to recap: the boundary that separates the agent's internal states from its environment) passes through the interior of human minds. You are simultaneously the environment it acts on and the substrate it acts with.

This is why it's a secret agent: secret in the sense that the agent's operations are constituted by the ordinary, felt texture of modern life – the scroll, the craving, the comparison, the vague anxiety, the inability to sustain attention on anything that doesn't deliver intermittent reward. So when people call it out, capitalism's defenders fall back on proxies: the flaws of the people complaining, or misguided and uninformed ideas of "human nature." It's hard to observe it, in roughly the same way it's hard to observe your own retina. Rather than being hidden behind your experience, it is a particular configuration of your experience.

The good news, more or less: causal emergence works both ways. If the pseudo-intelligence emerges from certain patterns of neural activity, then disrupting those patterns – reclaiming sustained attention, exercising the CEN, rebuilding the capacity for deep engagement, partaking in the social friction of making and keeping potentially inconvenient promises – doesn't just improve your mental health. It degrades the computational substrate of an agent that was running on your mind without asking. Every hour of genuine absorption in a single difficult task is a local act of counter-emergence; the emergence of something better, more conducive to your own happiness and survival.

The bad news, of course, is that it's hard. It's hard to do that stuff. It's hard to debug a program when you're the hardware it's running on. There's no "Claude, fix my psyche, make no mistakes" here. But it's not the hardest process to debug. That would be the one you don't even know is there.